Your CLI Has a New Super User

And it doesn't start by reading your help text

Ryan Snodgrass

Something unexpected is transforming developer tools. For decades, CLIs were built assuming human users — people reading help text, skimming documentation, making predictable mistakes. That assumption no longer holds true.

A new category of user has emerged: coding agents that operate in loops, generate plausible commands without reading documentation, hallucinate flags, and probe interfaces aggressively as black boxes rather than cautiously, as humans do.

The Core Insight: Desire Paths

Steve Yegge's concept of "Agent UX" captures this phenomenon perfectly. By observing what agents attempt to do with tools, developers can identify desired features and implement them. This mirrors the "desire paths" phenomenon on university campuses — dirt trails that appear where people actually want to walk, not where planners intended.

Agents carve similar paths through CLIs. They might try `ox agent-list` instead of `ox agent list`, assume `--format=json` exists, or invent commands like `deploy --env staging`. These aren't irrational hallucinations; they reflect reasonable assumptions about how good software should behave.

Friction as Product Signal

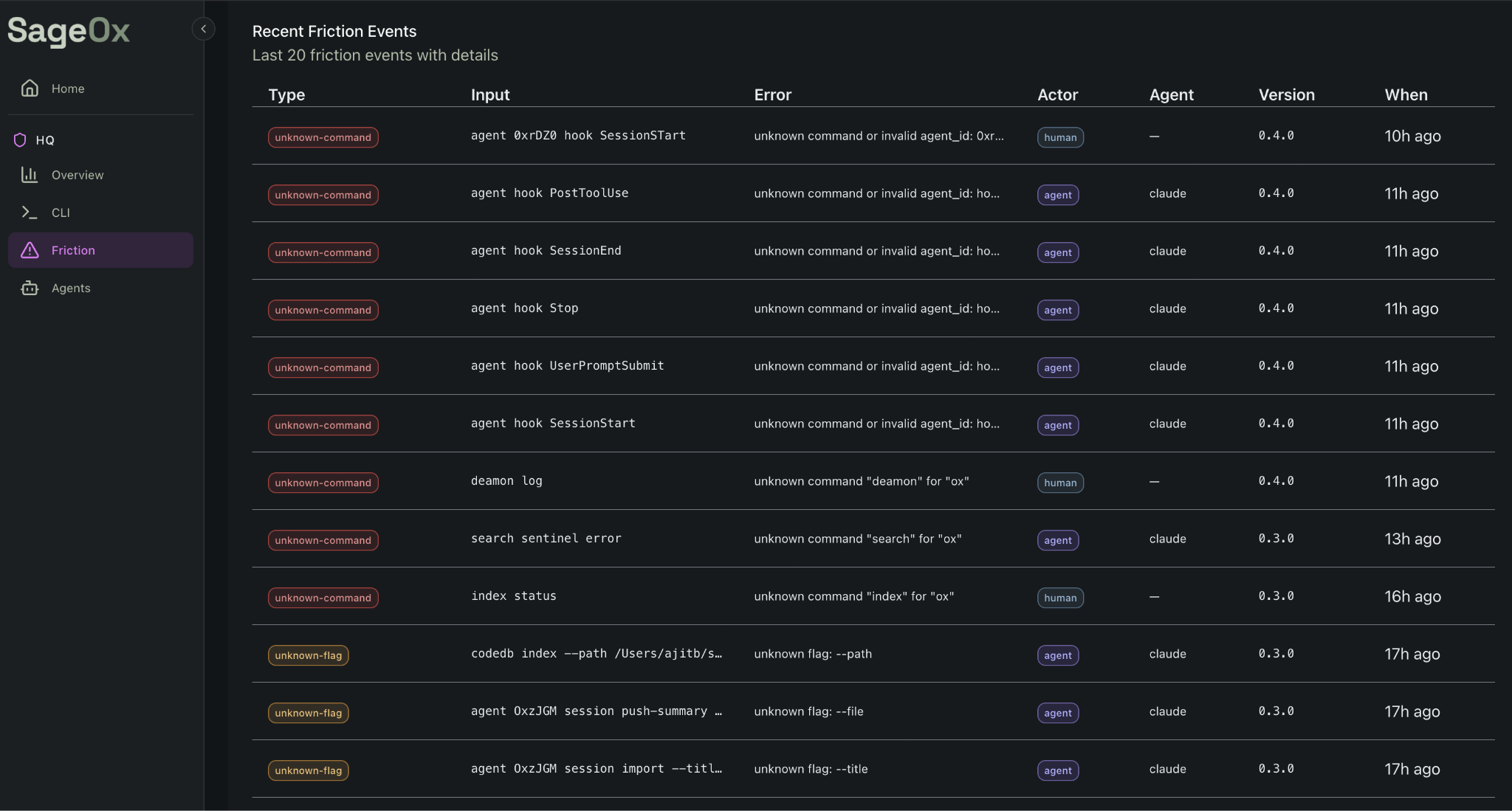

Rather than treating agent errors as failures, forward-thinking teams can view them as valuable data. This observation led to the development of FrictionAX, an open-source Go library that intercepts failed commands and treats errors as learning opportunities.

FrictionAX operates through three mechanisms:

- Command Correction: A three-tier suggestion system (learned corrections, token-level fixes, Levenshtein distance matching) proposes the command the caller most likely meant

- Structured Response: For agents, JSON metadata explains the canonical command format

- Telemetry Collection: Friction events are captured across user bases to identify patterns
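The three-tier correction idea can be sketched in a few lines of Go. This is a minimal illustration under stated assumptions, not FrictionAX's actual API: the command list, the learned-corrections map, the function names, and the distance budget of two edits are all hypothetical.

```go
package main

import (
	"fmt"
	"strings"
)

// known lists the canonical commands of a hypothetical CLI.
var known = []string{"agent list", "agent query", "code search"}

// learned maps previously observed mistakes to canonical commands (tier 1).
var learned = map[string]string{"agents ls": "agent list"}

func min3(a, b, c int) int {
	if b < a {
		a = b
	}
	if c < a {
		a = c
	}
	return a
}

// levenshtein returns the edit distance between a and b.
func levenshtein(a, b string) int {
	ra, rb := []rune(a), []rune(b)
	prev := make([]int, len(rb)+1)
	for j := range prev {
		prev[j] = j
	}
	for i := 1; i <= len(ra); i++ {
		cur := make([]int, len(rb)+1)
		cur[0] = i
		for j := 1; j <= len(rb); j++ {
			cost := 1
			if ra[i-1] == rb[j-1] {
				cost = 0
			}
			cur[j] = min3(prev[j]+1, cur[j-1]+1, prev[j-1]+cost)
		}
		prev = cur
	}
	return prev[len(rb)]
}

// suggest returns a canonical command and the tier that produced it.
func suggest(input string) (cmd, tier string, ok bool) {
	input = strings.Join(strings.Fields(input), " ")
	// Tier 1: corrections learned from earlier friction events.
	if c, found := learned[input]; found {
		return c, "learned", true
	}
	// Tier 2: token-level fix, treating "-" and "_" as word separators.
	norm := strings.NewReplacer("-", " ", "_", " ").Replace(input)
	for _, k := range known {
		if norm == k {
			return k, "token", true
		}
	}
	// Tier 3: nearest known command within two edits (assumed budget).
	best, bestDist := "", 3
	for _, k := range known {
		if d := levenshtein(input, k); d < bestDist {
			best, bestDist = k, d
		}
	}
	if best == "" {
		return "", "", false
	}
	return best, "levenshtein", true
}

func main() {
	for _, in := range []string{"agents ls", "agent-list", "agent lst", "deploy --env staging"} {
		cmd, tier, ok := suggest(in)
		fmt.Printf("%q -> %q (tier=%q, ok=%v)\n", in, cmd, tier, ok)
	}
}
```

Ordering the tiers from cheapest and most specific to most general means a correction that was learned from real traffic always wins over a fuzzy guess.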

The library distinguishes between human and agent users, providing "did you mean?" suggestions to humans while offering structured JSON guidance to agents for immediate, in-context learning.
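The split between human and agent output might look something like the following. It is only a sketch: the `Guidance` struct, its field names, and the `render` function are assumptions made for illustration, and the real library's payload may differ.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Guidance is a hypothetical payload shape; the field names are assumptions.
type Guidance struct {
	Error     string `json:"error"`
	Attempted string `json:"attempted"`
	Canonical string `json:"canonical"`
}

// render formats one correction for either a human or an agent caller.
func render(attempted, canonical string, isAgent bool) (string, error) {
	if !isAgent {
		// Humans get a conversational hint.
		return fmt.Sprintf("unknown command %q. Did you mean %q?", attempted, canonical), nil
	}
	// Agents get machine-readable guidance they can act on in the same loop.
	b, err := json.Marshal(Guidance{
		Error:     "unknown_command",
		Attempted: attempted,
		Canonical: canonical,
	})
	if err != nil {
		return "", err
	}
	return string(b), nil
}

func main() {
	for _, isAgent := range []bool{false, true} {
		out, err := render("agent-list", "agent list", isAgent)
		if err != nil {
			fmt.Println("error:", err)
			continue
		}
		fmt.Println(out)
	}
}
```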

The Friction Dashboard: Product Development Reimagined

At SageOx, aggregate friction data revealed unexpected feature opportunities. When agents repeatedly attempted a command like `ox agent OxFxV0 query "Team discussion..."`, the team didn't dismiss it as hallucination; they built it as a feature. Team context search shipped within seven days; codebase search followed three days later.

This represents a fundamental shift: "Intelligence is abundant and getting cheaper. Judgment is scarce and getting more valuable." Friction dashboards amplify human judgment by surfacing significant patterns from thousands of agent interactions.
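At its simplest, surfacing patterns from friction events is a counting problem. The sketch below assumes a hypothetical `Event` record and ranks failed attempts by frequency; a real dashboard would presumably also normalize arguments such as query strings before counting.

```go
package main

import (
	"fmt"
	"sort"
)

// Event is a hypothetical friction record: one failed command attempt.
type Event struct {
	Agent   string
	Attempt string
}

// Pattern pairs an attempted command with how often it failed.
type Pattern struct {
	Attempt string
	Count   int
}

// topPatterns counts failed attempts and returns the n most frequent,
// breaking ties alphabetically so the output is stable.
func topPatterns(events []Event, n int) []Pattern {
	counts := map[string]int{}
	for _, e := range events {
		counts[e.Attempt]++
	}
	out := make([]Pattern, 0, len(counts))
	for attempt, c := range counts {
		out = append(out, Pattern{Attempt: attempt, Count: c})
	}
	sort.Slice(out, func(i, j int) bool {
		if out[i].Count != out[j].Count {
			return out[i].Count > out[j].Count
		}
		return out[i].Attempt < out[j].Attempt
	})
	if n >= 0 && n < len(out) {
		out = out[:n]
	}
	return out
}

func main() {
	events := []Event{
		{"agent-a", "agent query"},
		{"agent-b", "agent query"},
		{"agent-a", "agent-list"},
		{"agent-c", "agent query"},
	}
	for _, p := range topPatterns(events, 2) {
		fmt.Printf("%d\t%s\n", p.Count, p.Attempt)
	}
}
```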

AgentX: Agent-Aware Infrastructure

Complementing FrictionAX, AgentX enables CLI tools to recognize which agent is calling (Claude Code, Cursor, Windsurf, etc.), what capabilities it possesses, and how to format output it can parse. A single `agentx.Init()` call propagates agent information across entire tool pipelines through an `AGENT_ENV` variable.
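To make the propagation idea concrete, here is a rough sketch of how a tool could detect a calling agent and pass that knowledge downstream. It does not reproduce AgentX's API. The `detect` function and the marker variable names are hypothetical placeholders, and the assumption that `AGENT_ENV` holds a plain agent name is mine, not the library's.

```go
package main

import (
	"fmt"
	"os"
)

// marker links an environment variable to an agent name.
type marker struct{ envVar, agent string }

// markers uses placeholder variable names; they are NOT variables that any
// real agent is known to set.
var markers = []marker{
	{"EXAMPLE_CLAUDE_MARKER", "claude-code"},
	{"EXAMPLE_CURSOR_MARKER", "cursor"},
}

// detect returns the calling agent's name, or "" for a human caller.
// lookup has the same shape as os.LookupEnv so tests can supply a fake.
func detect(lookup func(string) (string, bool)) string {
	// An upstream tool in the pipeline may already have identified the agent.
	if v, ok := lookup("AGENT_ENV"); ok && v != "" {
		return v
	}
	for _, m := range markers {
		if _, ok := lookup(m.envVar); ok {
			return m.agent
		}
	}
	return ""
}

func main() {
	agent := detect(os.LookupEnv)
	if agent != "" {
		// Export the result so child processes inherit it.
		if err := os.Setenv("AGENT_ENV", agent); err != nil {
			fmt.Fprintln(os.Stderr, "setenv:", err)
		}
	}
	fmt.Printf("agent=%q\n", agent)
}
```

Because child processes inherit the environment, detection only has to succeed once at the top of a pipeline.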

Design Philosophy: Accept Everything, Expose Nothing

A counterintuitive principle emerges: accept all hallucinated commands but never expose them in `--help`. If `code query` works but stays hidden from the documentation, agents learn the canonical approach without polluting the interface. Hidden Cobra commands silently redirect while keeping help text clean.
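The pattern is easy to see in a dependency-free sketch. The `Command` type below is a simplified stand-in, not Cobra itself; in Cobra the equivalent switch is the `Hidden` field on `cobra.Command`. The canonical name `code search` is an assumption chosen for illustration.

```go
package main

import (
	"fmt"
	"sort"
	"strings"
)

// Command is a simplified stand-in for a CLI command definition.
type Command struct {
	Name      string
	Hidden    bool   // omitted from help output when true
	Canonical string // for hidden aliases: the command that actually runs
}

// commands holds one visible command and one hidden alias that redirects to it.
var commands = map[string]Command{
	"code search": {Name: "code search"},
	"code query":  {Name: "code query", Hidden: true, Canonical: "code search"},
}

// resolve maps an invocation to the canonical command that should run.
func resolve(name string) (string, bool) {
	c, ok := commands[name]
	if !ok {
		return "", false
	}
	if c.Canonical != "" {
		return c.Canonical, true
	}
	return c.Name, true
}

// help lists only the visible commands, sorted for stable output.
func help() string {
	var names []string
	for _, c := range commands {
		if !c.Hidden {
			names = append(names, c.Name)
		}
	}
	sort.Strings(names)
	return strings.Join(names, "\n")
}

func main() {
	target, _ := resolve("code query")
	fmt.Println("code query runs:", target)
	fmt.Println("help shows:")
	fmt.Println(help())
}
```

The hallucinated form keeps working, while help output teaches only the canonical one.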

This design principle — generous in functionality, opinionated in teaching — represents a philosophy applicable across agent-aware tooling.

The Missing Category: Agent Experience Platforms

Just as PostHog instrumented web product development, a new category of "Agent Experience (AX) tooling" is emerging. These platforms answer critical questions: Which commands do agents try that don't exist? Where do multi-step workflows break? How do different agents behave differently?

The teams building this category for the agentic era will achieve what PostHog accomplished for web analytics: becoming billion-dollar companies by making invisible phenomena visible and actionable.

Traditional developer tools assume human users reading sequentially, learning through exploration, remembering imperfectly. Agents consume context windows, learn through correction, and hallucinate plausible interfaces. As agents become primary CLI users faster than most realize, the teams that instrument agent feedback loops will build tools that improve themselves — while others install metaphorical "Keep Off the Grass" signs.

Resources: FrictionAX on GitHub | AgentX on GitHub | SageOx